Every year, Embedded World in Nuremberg gathers industry and technology leaders, engineers, and researchers to examine where embedded technology is heading. This year’s keynote, “Learning from the Octopus: Nature’s Blueprint for Intelligence Everywhere,” sets the direction clearly: intelligence is moving to the edges, where decisions are made close to the data, in real time, with no room for latency or failure.

The octopus challenges our traditional view of intelligence. Rather than being concentrated in a single control center, intelligence is distributed across its arms, enabling autonomous action while remaining coordinated as a whole. Modern systems face a similar shift. For decades, progress meant more powerful central processors. Today, central intelligence is no longer enough. As real-time demands intensify, advantage lies in how intelligence is embedded, distributed, and orchestrated across devices, networks, and infrastructure.

This shift comes amid a historic semiconductor boom. According to Deloitte, roughly 1.05 trillion chips were sold in 2025 at an average price of $0.74. Yet AI accelerators, which represent less than 0.2% of that volume, now drive about half of industry revenue.
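A quick back-of-the-envelope check makes the imbalance concrete. The sketch below uses only the three figures quoted above. The derived prices are implied estimates rather than Deloitte figures, and for illustration it treats "less than 0.2%" as exactly 0.2% and "about half" as exactly 50%.

```python
# Figures quoted in the text (attributed to Deloitte).
TOTAL_UNITS = 1.05e12     # chips sold in 2025
AVG_PRICE_USD = 0.74      # average selling price across all chips

# Assumptions for illustration: "less than 0.2%" is taken as exactly 0.2%,
# and "about half" is taken as exactly 50%.
AI_UNIT_SHARE = 0.002
AI_REVENUE_SHARE = 0.5


def implied_prices(total_units, avg_price, unit_share, revenue_share):
    """Return (total revenue, implied AI chip price, implied price of other chips)."""
    total_revenue = total_units * avg_price
    ai_units = total_units * unit_share
    other_units = total_units - ai_units
    ai_price = total_revenue * revenue_share / ai_units
    other_price = total_revenue * (1 - revenue_share) / other_units
    return total_revenue, ai_price, other_price


if __name__ == "__main__":
    revenue, ai_price, other_price = implied_prices(
        TOTAL_UNITS, AVG_PRICE_USD, AI_UNIT_SHARE, AI_REVENUE_SHARE
    )
    print(f"Total revenue:        ${revenue / 1e9:,.0f}B")
    print(f"Implied AI chip price: ${ai_price:,.2f}")
    print(f"Implied other price:   ${other_price:,.2f}")
    print(f"Price ratio:           {ai_price / other_price:,.0f}x")
```

Under these assumptions, total revenue comes to about $777 billion, the implied average price of an AI accelerator is about $185, and every other chip averages about $0.37, a gap of roughly 500 times. Because the unit share is quoted as an upper bound, $185 is a lower bound on the implied accelerator price.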

The implication for embedded systems is clear. As real-world decisions increasingly require compute at the edge, and as enterprises expand on-premises and edge AI to address latency, sovereignty, and resilience, intelligence must move closer to the device layer.

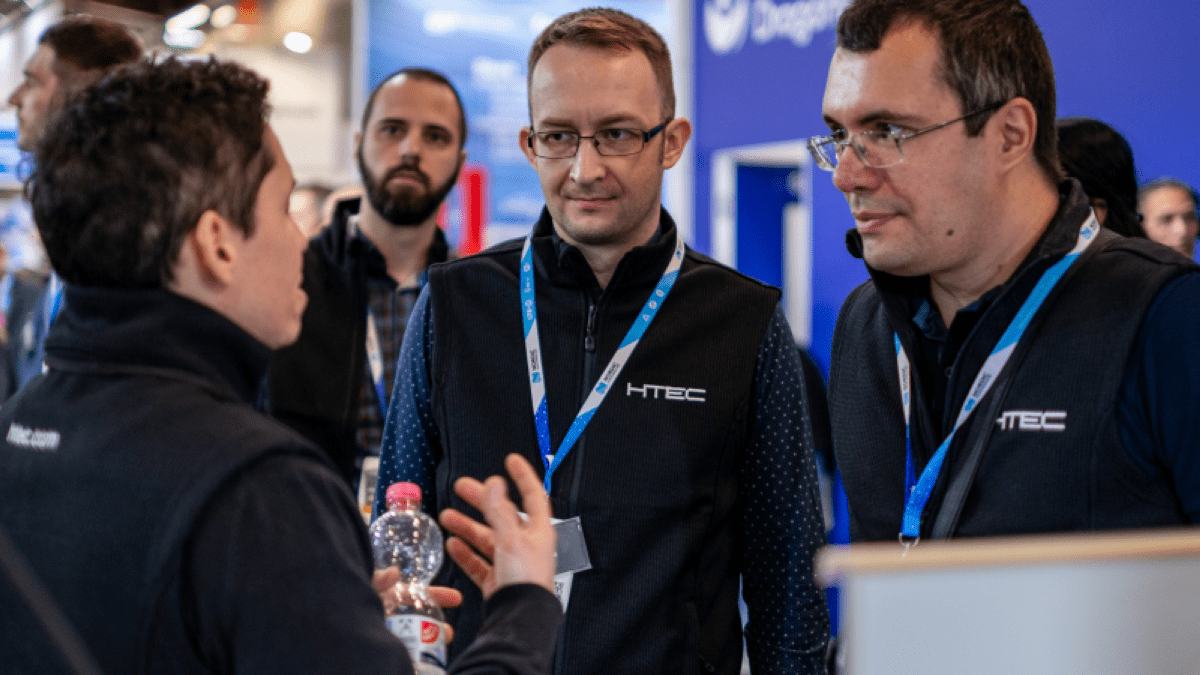

HTEC has spent years building at exactly this intersection, turning embedded complexity into deployable, production-grade solutions across industries. At Embedded World, that expertise will be on full display.

Below, we explore the trends set to shape the event, as well as the solutions HTEC will be demoing live for visitors.

Bringing Multi-Modal AI to the Edge

With our strategic partner SiMa.ai, we’ll be demoing how multi-modal AI workloads run efficiently on edge hardware using the Modalix MLSoC™ platform. The live demo showcases standalone generative AI in embedded systems, with large multi-modal models deployed directly on-device, enabling Physical AI applications without cloud dependency.

Visitors will be able to see how voice, text, and live camera inputs are processed in real time through an intuitive interface. At the core is an architecture optimized for highly efficient AI inference, delivering a time-to-first-token of 0.37 seconds for speech/text.
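For readers unfamiliar with the metric, time-to-first-token is the delay between submitting a request and receiving the first output token. The sketch below shows one generic way to measure it around any streaming generator. The names `measure_ttft` and `fake_stream` are hypothetical and are not part of the SiMa.ai or HTEC software, and the 0.05-second delay is a stand-in rather than a measured value.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def measure_ttft(start_stream: Callable[[], Iterable[str]]) -> Tuple[float, str]:
    """Return (seconds until the first token arrives, the first token)."""
    start = time.perf_counter()
    stream: Iterator[str] = iter(start_stream())
    first_token = next(stream)  # blocks until the first token is produced
    return time.perf_counter() - start, first_token


def fake_stream() -> Iterator[str]:
    """Hypothetical stand-in for a model: waits, then streams tokens."""
    time.sleep(0.05)  # simulated processing delay before the first token
    yield "Hello"
    yield " world"


if __name__ == "__main__":
    ttft, token = measure_ttft(fake_stream)
    print(f"time-to-first-token: {ttft:.3f} s (first token: {token!r})")
```

The timer starts before the stream is created, so the measurement includes any setup and input-processing time, which is what a user actually experiences as response delay.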

The demo runs Google’s Gemma 3 large multi-modal model (4B parameters) on the MLSoC™ platform, which combines SiMa.ai’s purpose-built AI hardware with HTEC’s production-grade AI chip software expertise. The result is efficient, low-latency deployment across Physical AI applications, from smart vision and intelligent video analytics to autonomous mobile robots.

The Rise of Physical AI: Robotics Moves from Controlled Environments to the Real World

IoT platforms, connectivity solutions, and applications are among the focal topics at Embedded World 2026, and robotics sits at the intersection of nearly all of them. Today’s robotic systems are less mechanical devices than distributed embedded systems that integrate sensor arrays, real-time processing, vision pipelines, and wireless communication into a coordinated whole. What makes this moment an inflection point is that AI is finally enabling practical perception and situational awareness within compact, power‑constrained packages.

In partnership with Infineon Technologies AG, we’ll be showcasing a live demo built on this trend: the 360° Awareness Humanoid Robotic Head. It brings together Infineon’s XENSIV™ 60 GHz radar for precise spatial awareness, REAL3™ Time-of-Flight sensors for depth perception, and XENSIV™ high-performance digital MEMS microphones for intelligent audio recognition within a unified embedded architecture. Paired with HTEC’s embedded AI and sensor-fusion software, these heterogeneous sensors give the robotic head a coherent, real-time understanding of its environment.

Connected Health: Embedded Intelligence, Safety, and Cybersecurity

Healthcare is one of the most demanding contexts for embedded development. As care increasingly extends beyond clinical walls into homes and remote settings, the embedded layer becomes the critical link between patient and provider.

Medical devices make these demands concrete. They must be certifiably safe, compliant, increasingly connected, and, under growing regulatory pressure, cybersecure by design. At Embedded World, HTEC will be demoing two devices: a telehealth solution for arrhythmia detection we developed with Humeds, and a connected elder-care system we created with Aloe Care.

Together, they demonstrate how IoT connectivity, real-time biosignal processing, multi-sensor integration, and edge analytics can enable continuous monitoring and timely intervention, while meeting the reliability, power, and security constraints of medical-grade embedded systems.

Connect with HTEC in Nuremberg

The embedded industry has never been short of ambition. What’s different now is the convergence of three things: AI silicon capable of running complex multimodal workloads at the edge, physical systems that can perceive and act in real time, and cybersecurity that is embedded by design rather than treated as an afterthought. Across our demos, that convergence is the common thread, and our focus is on what it takes to turn it into reality: production software that covers the full journey from AI chip to shipped product.

Find us in Hall 5, Booth 5-145, or get in touch to explore how we can help you translate silicon into a deployable, production‑grade platform.